No-Code ETL Tools: Everything You Want to Know

No-code ETL tools help businesses with data analysis and strategic decision-making without requiring tedious coding and development effort.

With tons of data available about your customers, the only thing hindering you from using it for organizational benefit may be the code. If that describes you and your business, you'll want to learn everything about no-code ETL tools. Once you do, the Extract, Transform, and Load mechanism that expert data engineers use will no longer be alien to you. You will get valuable information about your stakeholders, similar to what data professionals and data engineers would gather after years of hand-coded data science and data integration work. Doesn't that sound like a win-win deal? Let's dive deeper and explore no-code ETL tools in detail.

A brief introduction to ETL

ETL, which stands for Extract, Transform, and Load, is an essential process in data warehousing. During this process, data from multiple sources is consolidated into a single repository through data integration, giving decision-makers reliable information to act on.

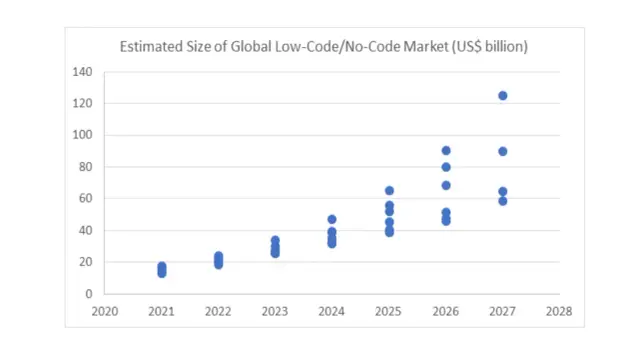

The low-code and no-code development industry is expected to reach $187 billion in revenue by 2030. The yearly increase in revenue is driven by growing business adoption of no-code ETL technology. Over 75% of companies are expected to adopt these tools and contribute to the growth of the data integration industry.

The growth in the no-code sector is not specific to the IT industry; half of this sector's growth is expected to come from companies outside IT.

Here's an introduction to each step in the process:

Extract - in this step, the different data sources used by your company are accessed, and all the data is collected in a single repository in a form that can be moved between software systems for further processing.

Transform - in this step, the data is cleaned and made consistent for further use. The main transformation rules include deduplication, verification, sorting, standardization, and data integration.

Load - loading places the transformed data in its new location, where it can be readily used for later processes like reporting and decision-making. There are two main loading mechanisms: full loading and incremental loading. No matter which mechanism is used, the result is easier data analytics.
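To make the three steps concrete, here is a minimal hand-written sketch using only Python's standard library. The sample data, the `customers` table, and the function names are assumptions made up for illustration; an in-memory SQLite database stands in for a real data warehouse.

```python
import csv
import io
import sqlite3

# Hypothetical raw exports from two systems; the second repeats one customer.
CRM_CSV = "email,name\nANA@EXAMPLE.COM ,Ana\nbob@example.com,Bob\n"
SHOP_CSV = "email,name\nana@example.com,Ana\ncy@example.com,Cy\n"


def extract(*sources):
    """Extract: read every source into one list of dict rows."""
    rows = []
    for text in sources:
        rows.extend(csv.DictReader(io.StringIO(text)))
    return rows


def transform(rows):
    """Transform: standardize, verify, deduplicate, and sort the rows."""
    unique = {}
    for row in rows:
        email = row["email"].strip().lower()  # standardization
        if "@" not in email:  # verification (deliberately simplistic)
            continue
        # deduplication: keep the first row seen for each email
        unique.setdefault(email, {"email": email, "name": row["name"].strip()})
    return sorted(unique.values(), key=lambda r: r["email"])  # sorting


def load(conn, rows, mode="full"):
    """Load: 'full' replaces the table, 'incremental' only adds new rows."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS customers (email TEXT PRIMARY KEY, name TEXT)"
    )
    if mode == "full":
        conn.execute("DELETE FROM customers")
    conn.executemany(
        "INSERT OR IGNORE INTO customers (email, name) VALUES (:email, :name)", rows
    )
    conn.commit()


conn = sqlite3.connect(":memory:")
load(conn, transform(extract(CRM_CSV)), mode="full")
load(conn, transform(extract(SHOP_CSV)), mode="incremental")
emails = [r[0] for r in conn.execute("SELECT email FROM customers ORDER BY email")]
```

In this sketch the incremental load skips the customer that already exists and adds only the new one. A no-code tool hides this kind of logic behind its interface.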

What is no-code ETL?

No-code ETL means carrying out the whole extract, transform, and load process without writing any code. It forms the backend of data integration. No-code ETL tools are designed to automate as much of the process as possible, so users don't have to write any lines of code to get it working efficiently. Businesses can use such tools without hiring dedicated ETL developers or data experts.

No-code ETL tools run in the cloud and often have a drag-and-drop interface that helps non-technical users figure out how to use them. With these tools, your organization can easily create its own data mart or data warehouse that will eventually inform strategy formation and decision-making.

Types of no-code ETL tools

There are four main types of no-code ETL tools. We'll briefly describe each of these types in this section:

Enterprise software ETL tools

These tools are developed and supported by commercial organizations. As pioneers in the development of no-code ETL processes, these companies have already flattened the learning curve and provide a graphical user interface and other easy-to-use features that make the tools more accessible and simpler to use.

However, the price charged for these features is often higher than that of other no-code ETL solutions on the market. Large organizations with a steady stream of incoming data and heavy analytics requirements across their data pipelines usually prefer such tools for data integration.

Open source ETL tool

Like other open source software, open source ETL tools are free to use. They provide basic functionality while allowing your organization to inspect and study the source code. But the features and ease of use offered by these tools vary significantly.

So, selecting open source ETL might require you to keep an in-house developer to tweak the base code for your organization if you don't want to rely on the basic features alone. However, open source ETL allows higher customizability than any other ETL tool type.

Cloud-based ETL tool

With the prominence of cloud technology, ETL tools are also available in cloud-based form. With cloud technology, you can expect low latency, high availability of resources, and elasticity, which lets computing resources scale to meet organizational demands. But one of the problems with cloud data platforms is that they only work within the cloud provider's environment.

Custom ETL tool

The last type is the custom ETL tool. These tools are built by large companies using in-house software development teams and can be tailored to the organization's requirements. Languages that might be used to create this software include SQL, Python, and Java.

The problem with these tools is often the cost and the resources they demand. Creating, testing, and maintaining them takes time and constant upgrading. So, you must be ready to set aside a specific budget for custom ETL tools.

Scope of ETL tools

The use of ETL tools has grown significantly over the past few years. Initially, ETL processes were handled only manually, with data scientists hired to carry out the entire data integration process.

But with the introduction of no-code tools by major software development houses, ETL tools have become far more widespread. The no-code market is expected to grow by 40% yearly, reaching $21.2 billion by the end of 2022. So, no-code ETL tools hold a significant share of the market.

How does manual ETL work?

Manual ETL relies on the data science and architecture skills of data analysts and engineers. There is no automation, and every step involves coding and expert supervision. Moreover, you must expect long work hours for each step in the process. This effort is not a one-time cost: it has to be repeated for every data source, which increases the overall workload. Besides, more work hours from data engineers mean higher costs on your end.

In the manual process, developers create pipelines for extracting, transforming, and loading data. The more data sources and data warehouses there are, the more time and human resources are required. Similarly, the data integration process needs more code to get up and running.

Broadly, manual data integration involves the following main steps:

- Documenting the requirements and outlining the entire process.

- Developing data integration, data warehouses, and data models for all the databases from which information needs to be extracted.

- Coding a pipeline for each data source that links the entire data set to the data warehouse.

- Rerunning the whole process to ensure everything works correctly.

The substeps of each task differ from one data flow to another because of the nature and format of the data. This makes the process complex and time-consuming.
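The point about each data source needing its own pipeline can be illustrated with a short sketch. The two sample exports, their field names, and the `pipeline_*` functions below are hypothetical; they only show how the parsing code changes with the format of each source.

```python
import csv
import io
import json

# Hypothetical exports: each source uses its own format and field names.
ORDERS_CSV = "order_id;total\n1001;19.90\n1002;5.00\n"
ORDERS_JSON = '[{"id": 2001, "amount_cents": 1250}]'


def pipeline_csv(text):
    """Hand-written pipeline for the semicolon-separated CSV source."""
    reader = csv.DictReader(io.StringIO(text), delimiter=";")
    return [
        {"order_id": int(r["order_id"]), "total": float(r["total"])} for r in reader
    ]


def pipeline_json(text):
    """Hand-written pipeline for the JSON source, which stores cents."""
    return [
        {"order_id": int(r["id"]), "total": r["amount_cents"] / 100}
        for r in json.loads(text)
    ]


# Every new source means another function like the two above.
warehouse = pipeline_csv(ORDERS_CSV) + pipeline_json(ORDERS_JSON)
```

Both functions end in the same target schema, but almost none of the code can be shared between them, which is where the extra hours in manual ETL tend to go.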

What is the difference between manual ETL and no-code ETL?

Running ETL manually and using no-code ETL tools are very different. The former is, without a doubt, a challenging and complex process. This section highlights the areas where manual coding differs from using a tool:

Usage

The ease of use that no-code ETL tools offer is hard to overstate. They come with a predefined process for extracting unstructured data, transforming it, and loading it into a clean repository. So, you don't have to do much except provide the locations for the data pipelines.

However, manual completion of the process isn't easy even for advanced data experts, as it requires a long process to get hold of the valuable information from the data. Besides, there is a chance of error in the coding that can ruin the entire data integration process.

Maintenance

The maintenance of manual ETL code is challenging. You'll have to master multiple computer languages to get a good command of the entire process. You might have to hire experts in each of these languages, or generalists who can get the work done with a limited set of them.

Besides, there will be multiple data integration scenarios for which you might need to perform the process, so it will have to be redone for each new type of information required. This will not be a cause of concern for your organization when opting for no-code ETL solutions. These tools do not require you or your team to be experts in computer science for maintenance; anyone can do it.

Cost

From the cost perspective, no-code ETL solutions will prove to be a better option because using them involves a predefined subscription cost, which isn't expensive considering the value you get in return. Hiring a data scientist, by contrast, requires a lot of investment. The yearly remuneration of a developer is over $100,000, and you'll also need to invest in others who might not be experts but must know the ETL processes well enough to support the data scientist. Similarly, specialized hardware would also be essential, increasing your costs further.

Performance

In terms of performance, manual ETL coding definitely gets the upper hand, because you get a process customized to your organizational needs. You can reduce or increase the data sources and apply your own rules during the transformation process. Not all of these activities are possible with no-code ETL tools, which are built on predefined code that runs the process as defined. Thus, the overall performance of results might vary slightly.

Scalability

ETL tools tend to scale as your organization's data sources and requirements change. So, there won't be much extra work if you go big in the future. However, manual data integration will require you to write even more code to get the same result.

Workflow automation

ETL tools also allow workflow automation because the data is extracted, transformed, and loaded into the required repository based on when and how you have scheduled the process. All this information is usually acquired from data warehouses. You won't have to perform every step of the data pipeline through coding. In a manual ETL process, all the databases and repositories have to be connected by hand with extensive code to run the entire process.

Use cases

No-code ETL tools are perfect when you have extensive databases that would otherwise require excessive coding work. If your databases are not very developed or the information you need is not urgent, you might choose manual ETL. Even in that case, however, you must be willing to write extensive lines of code.

Data sources

Another difference between manual ETL and no-code ETL is the number of data sources they handle comfortably. You can use either method for any number of data sources, but with manual ETL, the fewer the data sources, the less complex the process. No-code ETL tools let you connect any number of databases without additional coding.

Technical advancement

No-code tools can be of great help when you need to upgrade or change the current data map or pathway of your ETL process. With manual coding, you'll have to redo the entire coding process for the new version. If you have chosen open source ETL tools, adjusting or customizing them to your needs gets even more complex.

No-code ETL: how does it help you?

No-code ETL solutions can be helpful for your business because they work without coding. With a typical no-code ETL tool, you use a simple user interface to create a data map that describes the pathway to the server. Then, the server can run the entire process automatically without requiring further assistance from you.
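A data map like the one described above can be pictured as a small piece of configuration rather than a program. The sketch below is only an illustration under assumed names: the `DATA_MAP` layout, the source field names, and `apply_data_map` are hypothetical and do not reflect the format of any specific tool.

```python
# Hypothetical data map: target column -> (source field, converter).
DATA_MAP = {
    "customer_id": ("CustID", int),
    "full_name": ("Name", str.strip),
    "signup_year": ("SignupDate", lambda d: int(d[:4])),
}


def apply_data_map(record, data_map):
    """Build one target row by following the map for each target column."""
    return {
        target: convert(record[source])
        for target, (source, convert) in data_map.items()
    }


source_record = {"CustID": "42", "Name": "  Ana Lopez ", "SignupDate": "2021-03-15"}
target_row = apply_data_map(source_record, DATA_MAP)
```

In a no-code tool, the user would draw or select this mapping in the interface, and the server would apply it to every record on each run.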

Adding transformation rules is also one of the ways ETL can help you. Cleansing, re-structuring, separating, or removing data sets is possible to ensure the provision of updated and relevant information. Checking the data quality of the extracted data is also possible by applying some simple rules to the process.

You can schedule the entire ETL process, so you don't need to run it manually to get updated data sets and information for strategic decision-making. Besides, the visual, intuitive, and user-friendly interface ensures that everyone can use these ETL tools to save time, enhance productivity, and get better results.

How it works: data import and drag-and-drop workflows

While working with the no-code ETL processes, you'll encounter many scenarios in which the ETL tools are helpful. These include:

Connectors

If you have different data sources, you can easily connect them without writing a line of code. For example, if your customer data is stored in Oracle while the order information is in Microsoft Excel, the tool will connect to both sources.
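Behind the interface, a connector can be thought of as a small adapter that exposes every source through the same `read()` method. The following sketch is an assumption-laden illustration: the class names are hypothetical, an in-memory SQLite database stands in for Oracle, and CSV text stands in for an Excel export.

```python
import csv
import io
import sqlite3


class SqlConnector:
    """Reads rows from a SQL database (SQLite stands in for Oracle here)."""

    def __init__(self, conn, query):
        self.conn, self.query = conn, query

    def read(self):
        cursor = self.conn.execute(self.query)
        columns = [c[0] for c in cursor.description]
        return [dict(zip(columns, row)) for row in cursor]


class CsvConnector:
    """Reads rows from CSV text (a stand-in for a spreadsheet export)."""

    def __init__(self, text):
        self.text = text

    def read(self):
        return list(csv.DictReader(io.StringIO(self.text)))


# Hypothetical sample data for both sources.
db = sqlite3.connect(":memory:")
db.execute("CREATE TABLE customers (id INTEGER, name TEXT)")
db.executemany("INSERT INTO customers VALUES (?, ?)", [(1, "Ana"), (2, "Bob")])
orders_csv = "customer_id,item\n1,Laptop\n2,Mouse\n1,Monitor\n"

customer_rows = SqlConnector(db, "SELECT id, name FROM customers").read()
customers = {c["id"]: c["name"] for c in customer_rows}
orders = CsvConnector(orders_csv).read()
# Join the two sources on the customer id.
joined = [(customers[int(o["customer_id"])], o["item"]) for o in orders]
```

Because both connectors return plain lists of dictionaries, the join at the end does not need to know where each row came from.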

Data profiling

You'll have to define the data to get the most out of it. ETL tools let you specify data properties such as types, integrity, and quality. Based on the defined values, the data is sorted automatically.
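As a rough idea of what a profiling step computes, the sketch below summarizes each column of a few sample rows. The rows and the `profile` function are hypothetical, and real tools typically report many more statistics.

```python
# Hypothetical extracted rows with a missing value and inconsistent casing.
rows = [
    {"id": 1, "country": "DE", "revenue": 120.5},
    {"id": 2, "country": "de", "revenue": None},
    {"id": 3, "country": "FR", "revenue": 80.0},
]


def profile(rows):
    """Summarize each column: observed types, missing count, distinct count."""
    report = {}
    for column in rows[0]:
        values = [row.get(column) for row in rows]
        present = [v for v in values if v is not None]
        report[column] = {
            "types": sorted({type(v).__name__ for v in present}),
            "missing": len(values) - len(present),
            "distinct": len(set(present)),
        }
    return report


summary = profile(rows)
```

Here the report would flag one missing `revenue` value and three distinct `country` values, hinting that "DE" and "de" need standardizing before loading.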

Pre-built transformations

The ETL software may include pre-built transformations that can be applied directly to the raw data, making things a lot easier for you.
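One way to picture pre-built transformations is as a registry of named functions that the user selects instead of writing. The registry contents, the step format, and `apply_steps` below are assumptions for illustration only.

```python
# A small registry of reusable transformations, keyed by name.
TRANSFORMS = {
    "trim": str.strip,
    "lowercase": str.lower,
    "uppercase": str.upper,
    "title_case": str.title,
}


def apply_steps(rows, steps):
    """Apply the (column, transformation name) steps to every row, in order."""
    result = []
    for row in rows:
        row = dict(row)  # copy so the raw data stays untouched
        for column, name in steps:
            row[column] = TRANSFORMS[name](row[column])
        result.append(row)
    return result


raw = [{"name": "  ana LOPEZ ", "email": " Ana@Example.COM"}]
# The "configuration" a user would pick in a UI instead of writing code.
steps = [
    ("name", "trim"),
    ("name", "title_case"),
    ("email", "trim"),
    ("email", "lowercase"),
]
clean = apply_steps(raw, steps)
```

The `steps` list is the only part a user would change from one data set to the next; the transformations themselves are written once and reused.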

Convenient scheduling

You can schedule the ETL pipeline with specific triggers so that things stay automated and you don't have to step in at a particular time.
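Time-based triggers of this kind can be sketched with Python's standard `sched` module. The pipeline names, the intervals, and the `FakeClock` helper are hypothetical; the simulated clock is used only so the example finishes instantly instead of waiting for real hours to pass.

```python
import sched


class FakeClock:
    """Simulated clock so the example does not actually wait."""

    def __init__(self):
        self.now = 0.0

    def time(self):
        return self.now

    def sleep(self, seconds):
        self.now += seconds


clock = FakeClock()
scheduler = sched.scheduler(clock.time, clock.sleep)
runs = []


def run_pipeline(name):
    """Placeholder for a real ETL run; records when it was triggered."""
    runs.append((clock.now, name))


def schedule_every(interval, name, times):
    """Queue `times` runs of a pipeline, one every `interval` seconds."""
    for i in range(1, times + 1):
        scheduler.enter(interval * i, 1, run_pipeline, argument=(name,))


schedule_every(3600, "hourly_orders", times=3)
schedule_every(7200, "customers_sync", times=1)
scheduler.run()  # executes every queued run at its (simulated) time
```

With a real clock, `sched.scheduler()` uses its default time and sleep functions and the same code would wait between runs. A no-code tool replaces all of this with a schedule form.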

Best ETL tool for businesses - AppMaster

One of the best no-code ETL tools is AppMaster. It can automate the entire process of extracting, transforming, loading, and data validation.

Create data pipelines with AppMaster

Open source ETL tools might not be helpful in every situation. You need specialized software to create pipelines that extract data and turn manual ETL into an automated data architecture. You can certainly start your organization's data extraction and data integration journey with open source ETL tools. Still, you'll need specialized software that contains all the functionality required to create a seamless data pipeline, which ultimately helps in data preparation and data analysis. AppMaster is software that can fit these needs.

Implement the best practices of data warehousing

With AppMaster, you can use a PostgreSQL database to integrate data, load it, and convert it into a format that helps you make important decisions for your organization. All these aspects can be covered through manual ETL mechanisms; however, with AppMaster by your side, you can manage data integration without coding.

Integrate data sources

You just have to connect the different data structures your organization uses and let the tool perform its operations. The result of data integration will be the information you need to proceed with important decisions. Compared to manual ETL, the data integration process can be completed in less time, and you don't have to compromise on data quality or other factors.

Offers an easy-to-use interface

AppMaster is specifically designed to offer an easy-to-use interface while helping you extract the data. With different dashboard facilities for different stakeholders, aligning the information you need becomes much more accessible. Access to the correct information at the right time helps in better decision-making. Besides, such an interface saves time and offers all the essential data on a single screen.

Why is no-code ETL better than hand-coded ETL?

No-code ETL tools can provide an easy solution for managing the data in a way that can bring in more opportunities for growth for your business. You don't need ETL developers to perform the ETL process, which makes things a lot easier, user-friendly, and cost-effective.

With the multiple benefits they offer, no-code ETL tools are the new reality in the business world, especially for companies with tons of data. Among the many ETL tools available, you should rely on a platform that offers advanced features with easy usage, like AppMaster.