Discovering Auto-GPT: The Future of Automation with OpenAI's Models

Auto-GPT uses OpenAI's AI models to autonomously perform tasks, interact with software and online services, and streamline multi-step projects. The tool offers real benefits, but it also carries limitations and risks.

Silicon Valley's continuous pursuit of automation has produced Auto-GPT, an open-source application that uses OpenAI's models, such as GPT-3.5 and GPT-4, to act autonomously on a user's behalf.

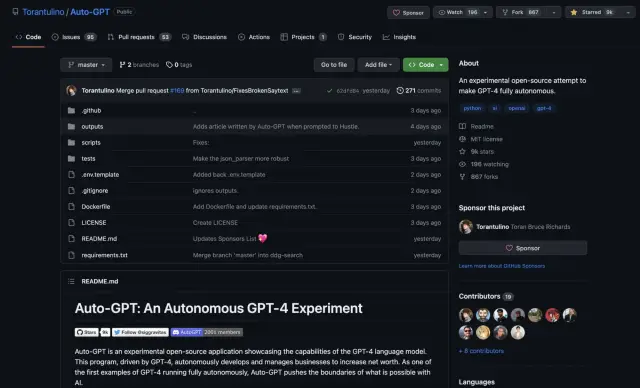

Auto-GPT captured attention on social media by showing that it can execute tasks by interacting with applications, software, and services, both online and locally. Developed by game developer Toran Bruce Richards, the app automates multi-step projects that would otherwise require a series of manual interactions with chatbot-oriented AI models like OpenAI's ChatGPT.

Auto-GPT pairs GPT-3.5 and GPT-4 with a companion bot that receives instructions from users and then employs several programs to accomplish their goals across different platforms.

Software developer Joe Koen, who has experimented with Auto-GPT, explains that users can input their objectives and goals into Auto-GPT, which then communicates with OpenAI's API. The AI generates responses to guide the Auto-GPT agent through the required commands, completing tasks without user intervention.
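The loop Koen describes can be sketched in a few lines. This is a minimal illustration, not Auto-GPT's actual code: the `COMMANDS` table, the `run_agent` function, and the reply format are all hypothetical, and `scripted_model` is a stand-in that returns canned replies where a real agent would call OpenAI's API.

```python
from typing import Callable, Dict, List

# Hypothetical command table: names and behaviours are illustrative only.
COMMANDS: Dict[str, Callable[[str], str]] = {
    "search": lambda arg: f"results for {arg!r}",
    "write_file": lambda arg: f"wrote {len(arg)} characters",
}


def run_agent(
    goal: str,
    ask_model: Callable[[str, List[str]], dict],
    max_steps: int = 5,
) -> List[str]:
    """Ask the model for the next command until it says 'finish'."""
    history: List[str] = []
    for _ in range(max_steps):  # hard cap so the loop cannot run forever
        reply = ask_model(goal, history)
        name = reply["command"]
        arg = reply.get("argument", "")
        if name == "finish":
            break
        handler = COMMANDS.get(name)
        result = handler(arg) if handler else f"unknown command {name!r}"
        # Feed each outcome back so the next model call can see it.
        history.append(f"{name}({arg!r}) -> {result}")
    return history


def scripted_model(goal: str, history: List[str]) -> dict:
    """Stand-in for a call to OpenAI's API: returns canned replies."""
    script = [
        {"command": "search", "argument": goal},
        {"command": "write_file", "argument": "summary"},
        {"command": "finish"},
    ]
    return script[min(len(history), len(script) - 1)]


log = run_agent("compare note-taking apps", scripted_model)
for entry in log:
    print(entry)
```

The step cap is a deliberate choice in this sketch: because no user is watching each turn, an upper bound on iterations is one simple way to keep an autonomous loop from running indefinitely.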

Auto-GPT's versatility relies on features such as memory management for task execution, GPT-4 for text generation, and GPT-3.5 for file storage and summarization. It can even connect to speech synthesizers, which lets the AI make phone calls, for example.

However, to use Auto-GPT, users must install it in a development environment like Docker and obtain an API key from OpenAI, which requires a paid account. Despite these hurdles, early adopters have found Auto-GPT invaluable for handling mundane tasks such as debugging code, writing emails, or even devising business plans for new startups.

Adnan Masood, Chief Architect at tech consultancy firm UST, highlights that while large language models excel in generating human-like responses, they also require user inputs and interactions to deliver the desired outcomes. In contrast, Auto-GPT operates independently, taking advantage of OpenAI's advanced API capabilities.

Recently, new applications like AgentGPT and GodMode have emerged to simplify Auto-GPT's usage, providing users with an accessible interface through a web browser. Nonetheless, these tools still necessitate an API key from OpenAI for full functionality.

Despite its powerful capabilities, Auto-GPT comes with limitations and risks. The tool may exhibit unpredictable behavior, depending on the objectives provided. Additionally, its reliance on OpenAI's language models for task completion means it may produce inaccuracies and errors. Furthermore, Auto-GPT struggles to recall previously completed tasks and often fails to remember the appropriate programs to use for similar tasks in the future. It also faces difficulties in breaking down complex tasks and understanding overlapping goals.

Clara Shih, CEO of Salesforce's Service Cloud and an Auto-GPT enthusiast, emphasizes the importance of integrating a human-in-the-loop approach for enterprises employing generative AI technologies like Auto-GPT. This helps mitigate the risks and maximize the potential of Auto-GPT's capabilities in a safe and efficient manner.
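One way to picture a human-in-the-loop approach is an approval gate that sits between the agent's proposed actions and their execution. The sketch below is an assumption-laden illustration, not how Auto-GPT or Salesforce implements it: `run_with_approval` and `cautious_reviewer` are hypothetical names, and the reviewer here is a simple rule where a real deployment would ask a person.

```python
from typing import Callable, List, Tuple


def run_with_approval(
    proposed: List[str],
    approve: Callable[[str], bool],
    execute: Callable[[str], str],
) -> List[Tuple[str, str]]:
    """Execute only the actions that the reviewer approves."""
    outcomes: List[Tuple[str, str]] = []
    for action in proposed:
        if approve(action):
            outcomes.append((action, execute(action)))
        else:
            # Rejected actions are recorded but never run.
            outcomes.append((action, "skipped by reviewer"))
    return outcomes


def cautious_reviewer(action: str) -> bool:
    """Hypothetical policy: block anything that sends or deletes."""
    return not action.startswith(("send", "delete"))


results = run_with_approval(
    ["draft email to team", "send email to team", "delete old logs"],
    approve=cautious_reviewer,
    execute=lambda action: f"done: {action}",
)
for action, outcome in results:
    print(f"{action}: {outcome}")
```

In an interactive setting, `approve` could instead prompt a person and return their yes-or-no answer, so that nothing irreversible runs without sign-off.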

As the no-code landscape continues to expand, platforms like AppMaster enable users to build sophisticated backend, web, and mobile applications with ease. Like Auto-GPT, AppMaster helps users develop and manage projects, contributing to a more streamlined and efficient workflow in various sectors.