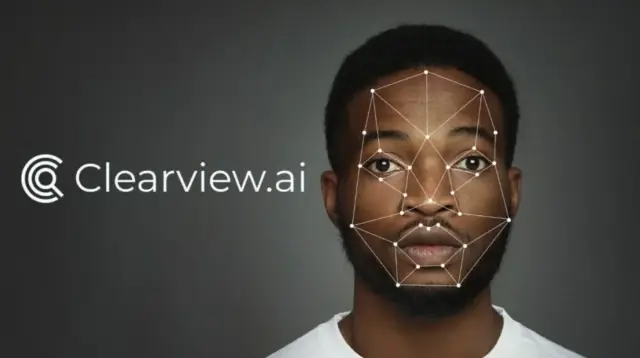

Police use highly controversial facial recognition tech, Clearview AI, in almost 1 million US searches

Facial recognition firm Clearview AI is increasingly used by US law enforcement, despite privacy concerns and wrongful arrests.

Facial recognition company Clearview AI has disclosed that it has conducted nearly one million searches on behalf of US law enforcement agencies. The technology, which has raised significant privacy and ethical concerns, allows officers to upload images of suspects’ faces to identify them within a database of billions of scraped images.

CEO of Clearview AI, Hoan Ton-That, revealed in a conversation with the BBC that the company has gathered 30 billion images from online platforms, including Facebook, without obtaining user consent. Although the firm has faced multiple fines totaling millions of dollars from Europe and Australia due to privacy violations, US law enforcement agencies continue to employ its powerful software.

According to Matthew Guariglia from the Electronic Frontier Foundation, the use of Clearview AI by police places everyone within a “perpetual police line-up.” Officially, the technology is presented as a tool for serious or violent crime investigations; however, the Miami Police Department confirmed that it uses Clearview AI for all types of criminal cases.

Assistant Chief of Police for Miami, Armando Aguilar, stated that his team uses Clearview AI’s system around 450 times per year and credits the software with solving several murders. However, many instances of mistaken identity resulting from police use of facial recognition technology have been documented. One notable case involved Robert Williams, who was wrongfully arrested in front of his family and held in an unclean, overcrowded cell overnight.

“The perils of face recognition technology are not hypothetical — study after study and real-life have already shown us its dangers,” said Kate Ruane, Senior Legislative Counsel for the American Civil Liberties Union (ACLU), in reference to the reintroduction of the Facial Recognition and Biometric Technology Moratorium Act. Ruane highlighted the inaccurate identification of individuals of color as a significant problem, which has caused the wrongful arrests of several black men, including ACLU client Robert Williams.

The lack of transparency surrounding police use of facial recognition technology has left many wondering about the true extent of wrongful arrests resulting from it. Civil rights activists are demanding that law enforcement agencies disclose when Clearview AI is employed and that its accuracy be openly assessed in court. It is crucial to establish a balance between leveraging technology to combat crime and protecting individuals’ rights and privacy. To assist with these efforts, independent experts should evaluate the systems used for facial recognition by law enforcement.

As the tech industry progresses, [no-code and low-code solutions](https://appmaster.io/blog/full-guide-on-no-code-low-code-app-development-for-2022) have become increasingly popular. Platforms like AppMaster have enabled more seamless [custom software development](https://appmaster.io/blog/custom-software-development) by providing visually driven design tools that cater to a wide variety of customers. Though rapid advances in technology bring various benefits, striking a balance between innovation and maintaining civil liberties remains an ongoing challenge.